About Me

Robotics Team Lead and Systems Architect with 8+ years building production-grade service robots. Specialized in ROS2-based autonomy stacks, perception, navigation, embedded systems, and AI integration. Proven experience owning full robotics architectures — from low-level firmware and real-time communication to high-level autonomy and cloud connectivity — while leading multi-disciplinary teams to deliver commercial robotic products.

Barista Project Lead Architect

Designed and implemented the full system architecture for a multi-machine automated barista solution, integrating ROS2 behavior trees, RS485+CAN communication, and cloud-based facial recognition.

Cursor Hackathon Heilbronn

A 30-hour hackathon for developing AI-based solutions, focused mainly on building RoboScribe: natural-language policy validation and data collection for humanoid robots.

Television Public Speaker & Robotics Industry Expert

Featured as a robotics expert on television, discussing humanoid robotics, AI integration, and the future of intelligent machines in healthcare and daily life.

AI-Powered Robotics Integration (SMART Customer Support Bank Agent)

Developed an AI-driven assistant deployed on the Duet service robot with advanced navigation, perception, and multi-modal interaction.

Warehouse Automation Success

Architected and implemented the MB90a logistics robot software from scratch using ROS2, CAN protocols, and holonomic motion control.

Advanced Navigation Solutions

Implemented hybrid 2D/3D mapping and navigation combining Cartographer, MPC, and Open3D for dynamic obstacle handling.

System Architecture and Design

Designed and implemented robotics systems across multiple projects, combining multi-sensor fusion, behavior trees, and real-time communication protocols.

Leadership in Robotics Innovation

Built and led multi-disciplinary teams delivering 30+ robotics projects and 8 distinct robot models across industries.

Pioneer in Commercial Robotics in MENA Region

Created one of the first restaurant delivery robot products in the region during my early career at MARSES Robotics.

Programming Languages

Robotics & Embedded Systems

DevOps & Infrastructure

Perception & Navigation

AI & Machine Learning

Databases

Job Roles & Responsibilities

Detailed overview of my professional experience and key responsibilities

2024 - Present

California, USA (Remote)

- Designed and developed the complete software architecture for a robotic kitchen automation system.

- Integrated microcontroller solutions (ARM Cortex-M, STM32) with CAN and RS485 protocols.

- Simulated the solution in Gazebo before mechanical integration.

- Led ROS2-based communication and orchestration design.

- Developed motion planning pipelines using MoveIt2, OMPL, and CHOMP planners.

- Implemented 3D Octomap-based perception for object detection and avoidance.

- Architected the OpenArm haptic teleoperation system, enabling bilateral control over networks with 1000Hz feedback loops, synchronized data pipelines for imitation learning using GStreamer/NVENC, and automated quality validation for AI training datasets.

2020 - 2024

Dubai, UAE

- Led a cross-functional team of robotics engineers, developers, and installation specialists.

- Designed AI-powered customer support agents using LLMs (LLaMA3, ChatGPT4, Qwen3) with STT/TTS and data synthesis for improved interactivity.

- Architected end-to-end mapping, localization, and 3D perception pipelines with multi-sensor fusion (LiDAR, IMU, cameras) and SLAM integration.

- Developed multi-robot fleet management systems with advanced perception and autonomous navigation using ROS behavior trees.

- Implemented Agile processes, streamlining workflows and reducing delivery time by 30%.

- Integrated real-time communication systems using WebSockets, ProtoBuf, and APIs.

- Oversaw Android app and web app development for customer-facing robotics interfaces.

- Implemented RS485+CAN communication for multi-controller machine orchestration.

- Established robotics technology adoption strategy through market analysis.

- Coordinated hardware-software integration with mechanical teams.

- Delivered over 30 robotics projects and 8 unique product lines.

- Used Docker for containerized deployments and implemented CI/CD pipelines for automated testing and delivery.

- Managed Git-based version control and branching strategies for multi-team collaboration.

2017 - 2020

Cairo, Egypt

- Developed the first proof-of-concept delivery robot for restaurants.

- Developed autonomous mapping and localization using ROS.

- Conducted R&D resulting in 4 new product lines.

- Programmed robots using ROS, C++, Python, and Embedded C.

- Established the company's software team architecture.

- Optimized installation processes and designed a custom Linux-based image, reducing installation time by 30%.

- Modeled motion control in MATLAB and Simulink.

Featured Projects

A showcase of my recent work and personal projects

Designed and implemented an AI-driven virtual assistant for banks using LLMs, STT/TTS, Cartographer navigation, MPC planning, Open3D-based 3D perception, IMU-odometry fusion, and WebSocket APIs to create a multilingual, interactive bank assistant.

Built from scratch with autonomous mapping, localization, and perception to achieve 99.9% sanitation accuracy in COVID-19-infected environments.

Increased logistics efficiency by 35% through automated navigation and holonomic motion control.

Duet is a friendly, expressive, interactive mobile robot designed to work as a standalone promoter that can welcome guests, guide them, hold conversations, handle check-in and check-out, print and scan documents, and provide information in an engaging way using changeable facial expressions.

- Developed the autonomous navigation, perception, and control software for a dry fog disinfection robot capable of atomizing various disinfectants into a super dry mist for high-speed airflow sanitization.

- Integrated mapping, localization, and obstacle avoidance algorithms to enable the robot to autonomously reach target areas and achieve full 360° disinfection coverage.

- Designed the control logic for dry fog spraying nozzles to ensure precise and efficient air purification.

- Implemented ROS-based task planning to coordinate navigation and spraying cycles, optimizing both coverage and operating time.

- Developed the complete robotics software stack for Rolly, an Autonomous Mobile Robot designed for secure, non-contact deliveries in multi-environment setups.

- Implemented fleet management capabilities, enabling multiple robots to operate seamlessly without collisions or interference.

- Integrated navigation for complex indoor routes, including BMS integration to automatically close and open doors.

- Designed human-robot interaction systems with custom touchscreen interface and application layer tailored to each client's needs.

- Implemented ROS-based autonomous navigation, obstacle avoidance, and task orchestration to ensure reliability, safety, and scalability.

Developed as part of MARSES' proof-of-concept robotics solutions for restaurants, with smart waiter capabilities, payment gateway redirection, customer satisfaction surveys, menu ordering, and delivery.

Developing a centralized platform for monitoring, controlling, and optimizing multi-robot operations in warehouses and public spaces.

Currently Working On

My latest projects and ongoing initiatives

- Designed RS485+CAN communication linking more than 24 microcontrollers.

- Implemented ROS2 behavior trees to orchestrate beverage preparation across 60+ beverage types.

- Used Lifecycle nodes and actions to maintain system stability while handling failures and edge cases.

- Designed and implemented cloud-based facial recognition to make the customer experience more interactive.

- Oversaw and contributed to the development of a queuing system capable of forecasting stock levels and machine idle time.

- Designed an inventory and stock management system.

- Integrated POS system, achieving a fully automated barista system capable of delivering 600+ beverages per day.

Progress

95%

- Used MoveIt2 with OMPL and CHOMP planners, and Octomap for 3D detection.

- Working with a 4-DOF system in a tight space to move items from the display area to the delivery area.

- Interacting with the stock database to keep the system ready to deliver, fetching the nearest item and tracking expiry dates.

Progress

80%

- Architected a distributed teleoperation framework enabling 1:1 haptic coupling over VPN-secured networks.

- Developed a 1000Hz bilateral control loop using a custom UDP transport layer, implementing startup synchronization and smoothing to prevent torque spikes during remote handshakes.

- To facilitate Imitation Learning, engineered a high-performance data pipeline using Shared Memory (SHM) ring buffers and GStreamer/NVENC, achieving sub-10ms synchronization across four HD camera streams (including OAK-D Depth) with minimal CPU overhead.

- Established automated quality gates, validating 500Hz joint-state telemetry against 30Hz visual observations to ensure nanosecond-precision alignment for training end-to-end policies like ACT and Diffusion Policy.
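
As a rough illustration of the quality-gate idea above, the sketch below checks that every camera frame has a joint-state sample close to it in time. It is not the project's actual code: the function names, the integer-nanosecond timestamp format, and the 1 ms threshold are all assumptions made for the example.

```python
from bisect import bisect_left


def nearest_offsets(frame_ts, joint_ts):
    """For each frame timestamp, return the absolute gap to the closest
    joint-state timestamp. joint_ts must be non-empty and sorted ascending.
    Timestamps are assumed to be integer nanoseconds."""
    offsets = []
    for t in frame_ts:
        i = bisect_left(joint_ts, t)
        # Only the neighbours on either side of the insertion point can be closest.
        candidates = joint_ts[max(i - 1, 0):i + 1]
        offsets.append(min(abs(t - c) for c in candidates))
    return offsets


def passes_gate(frame_ts, joint_ts, max_offset_ns):
    """Hypothetical gate: accept a recording only if every frame has a
    joint-state sample within max_offset_ns."""
    if not joint_ts:
        return False
    return all(o <= max_offset_ns for o in nearest_offsets(frame_ts, joint_ts))


# Synthetic one-second recording: 500 Hz joint states, 30 Hz frames.
joint_ts = [k * 2_000_000 for k in range(500)]
frame_ts = [k * 33_333_333 for k in range(30)]
ok = passes_gate(frame_ts, joint_ts, max_offset_ns=1_000_000)
```

With ideal 500 Hz telemetry the nearest sample is never more than half a period (1 ms) away, so a tighter threshold would need interpolation between joint samples rather than nearest-neighbour matching.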

Progress

90%

- Developed control, navigation, and perception for a semi-humanoid robot platform.

- Controlling a 10-DOF system plus fingers.

- Using stereo cameras for 3D perception and 3D pose estimation.

- Built a robust asynchronous motor-control architecture that enables easy integration with MoveIt.

Progress

60%

Migrated Stanford's Universal Manipulation Interface to GoPro 13 + Maxlens v2.0 (177° FOV), requiring complete sensor recalibration and SLAM pipeline adaptation.

Completed Work:

- Performed Kannala-Brandt fisheye calibration with sub-pixel reprojection accuracy

- Characterized IMU noise parameters via Allan deviation for Visual-Inertial Odometry

- Built Foxglove Studio visualization pipeline for validating SLAM trajectories and sensor alignment via MCAP format

- Fixed critical timestamp synchronization in multi-camera capture infrastructure

- Validated end-to-end data flow from raw video to Zarr training datasets
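
For readers unfamiliar with the Allan deviation step listed above, here is a minimal sketch of the non-overlapping estimator applied to a stationary IMU log. It is an illustration only, not the calibration tooling used in this work; the function name and the 200 Hz sample period in the usage lines are assumptions.

```python
import math


def allan_deviation(rates, dt, m):
    """Non-overlapping Allan deviation of a rate signal (e.g. gyro output)
    for averaging time tau = m * dt.

    rates: samples from a stationary sensor, dt: sample period in seconds,
    m: number of samples per averaging bin.
    Returns (tau, adev)."""
    n_bins = len(rates) // m
    if n_bins < 2:
        raise ValueError("need at least two full bins for this averaging time")
    # Average the signal over consecutive bins of m samples.
    means = [sum(rates[k * m:(k + 1) * m]) / m for k in range(n_bins)]
    # Allan variance: half the mean squared difference of adjacent bin averages.
    avar = sum((b - a) ** 2 for a, b in zip(means, means[1:])) / (2 * (n_bins - 1))
    return m * dt, math.sqrt(avar)


# Deterministic toy signal alternating between 0 and 1, sampled at an assumed 200 Hz.
samples = [0.0, 1.0] * 8
tau1, adev1 = allan_deviation(samples, dt=0.005, m=1)
tau2, adev2 = allan_deviation(samples, dt=0.005, m=2)
```

In practice the deviation is evaluated over a wide range of `m` values and plotted on log-log axes; noise parameters such as angle random walk and bias instability are then read off the slope regions of that curve.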

Next Steps:

- Isaac Sim Integration: Converting training pipeline to robomimic-compatible HDF5 format for simulation-based policy training and domain randomization

- Sim-to-Real Validation: Validating trained policies in simulation before real-world deployment on UR5/Franka hardware

Progress

50%

- Built a ROS/Gazebo pipeline that automatically tunes local navigation parameters (e.g., costmap inflation radius and cost scaling factor) via a genetic algorithm.

- The system launches navigation goals through Locomotor/DWB, live-updates parameters using dynamic_reconfigure, and evaluates each candidate with multi-objective fitness: time-to-goal, collision penalties (Gazebo contact sensors), and front/side local-cost metrics sampled from the costmap.

- Runs iterative generations with elitism, crossover, and mutation, resetting the world and robot pose between trials.

- Includes goal progress monitoring via actionlib feedback, AMCL/odometry hooks, and automatic cancellation/recovery on timeouts or failure states.

- Results are serialized for offline analysis.
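
The generation loop described above can be sketched roughly as follows. This is a simplified stand-in, not the project's pipeline: the parameter bounds, population settings, and `toy_cost` function are hypothetical, and in the real system the cost would come from a Gazebo trial (time-to-goal, collision penalties, local-cost metrics) rather than a formula.

```python
import random

# Hypothetical search ranges for the two costmap parameters named above.
BOUNDS = {"inflation_radius": (0.1, 1.0), "cost_scaling_factor": (1.0, 20.0)}


def random_candidate(rng):
    return {k: rng.uniform(lo, hi) for k, (lo, hi) in BOUNDS.items()}


def crossover(a, b, rng):
    """Uniform crossover: each parameter is taken from one parent at random."""
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in BOUNDS}


def mutate(c, rng, rate=0.2, scale=0.1):
    """Gaussian mutation, clamped back into the allowed range."""
    out = {}
    for k, (lo, hi) in BOUNDS.items():
        v = c[k]
        if rng.random() < rate:
            v += rng.gauss(0.0, scale * (hi - lo))
        out[k] = min(hi, max(lo, v))
    return out


def evolve(cost, generations=20, pop_size=12, elite=2, seed=0):
    """Minimise cost(candidate) with elitism, crossover, and mutation."""
    rng = random.Random(seed)
    pop = [random_candidate(rng) for _ in range(pop_size)]
    for _ in range(generations):
        ranked = sorted(pop, key=cost)      # lower cost is better
        parents = ranked[:pop_size // 2]    # truncation selection
        children = [
            mutate(crossover(*rng.sample(parents, 2), rng), rng)
            for _ in range(pop_size - elite)
        ]
        pop = ranked[:elite] + children     # elites survive unchanged
    return min(pop, key=cost)


def toy_cost(c):
    """Stand-in for one simulated navigation trial."""
    return (c["inflation_radius"] - 0.55) ** 2 + ((c["cost_scaling_factor"] - 10.0) / 10.0) ** 2


best = evolve(toy_cost)
```

Because simulated trials are noisy, a real cost function would typically average several runs per candidate; the sketch calls `cost` repeatedly on the same candidate, which is only reasonable for a cheap deterministic function like `toy_cost`.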

Progress

50%

Research & Development

Exploring cutting-edge technologies and innovative solutions

A 30-hour hackathon for developing AI-based solutions, focused mainly on building RoboScribe: natural-language policy validation and data collection for humanoid robots.

- A natural language robotics interface that converts spoken or typed commands into executable humanoid robot motions inside NVIDIA Isaac Sim, enabling hands-free control and dataset generation.

- Integrates LLMs (DeepSeek via Featherless API) for command parsing and vision-language models (Qwen3-VL-2B) for object-aware navigation using robot camera input.

- Built as a full-stack system with a FastAPI backend, Next.js dashboard, and Isaac Sim extension, communicating via WebSockets for real-time control.

- Supports voice interaction (ElevenLabs STT/TTS), trajectory recording at 200Hz, and exporting robot motion data for learning or validation tasks.

- Designed to validate and analyze robot policies by comparing natural-language-driven actions with simulated execution in a humanoid robot environment.

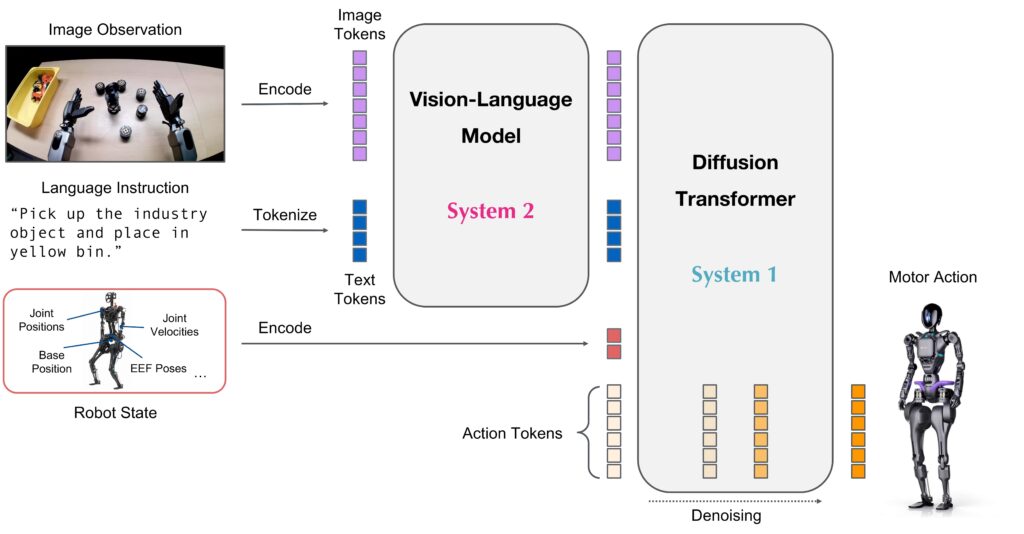

Researching end-to-end architectures that unify vision-language encoders with LLM backbones (e.g., Llama-2) to enable robots to 'speak' the language of motor commands.

- This paradigm utilizes internet-scale pre-training through encoders like SigLIP and DINOv2 to grant robots semantic reasoning and zero-shot generalization to novel tasks.

- The system leverages the Open X-Embodiment dataset—comprising 1M+ trajectories across 22 robot types—to achieve cross-embodiment capabilities.

- My research explores the trade-offs between discrete autoregressive tokenization (RT-2, OpenVLA) and continuous generative policies like Diffusion (Octo) or Flow Matching (pi-zero), alongside optimization strategies like FAST tokenization for real-time edge deployment on mobile platforms.

Combining 3D clustering, object pose estimation, and YOLO detection for enriched local perception.

- Developing a multi-sensor 3D perception pipeline that fuses depth/LiDAR point clouds with RGB camera data.

- Point clouds are processed in Open3D for voxel filtering, clustering (e.g., DBSCAN), and object pose estimation using RANSAC/ICP.

- YOLO-based object detection runs on synchronized RGB frames, with detected classes associated to 3D clusters to add semantic labels.

- Final results are projected into a local perception map and published to the navigation stack, enabling both geometric and semantic reasoning for obstacle avoidance, path planning, and behavior selection.

- The system integrates SLAM-based localization with TF2 to ensure consistent transforms across all data sources.
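
The step that attaches YOLO classes to 3D clusters can be illustrated with a small sketch. It assumes clustering has already produced centroids expressed in the camera frame and that detections arrive as pixel bounding boxes; the dictionary layout, function names, and intrinsics values are hypothetical and not taken from the actual pipeline.

```python
def project(point, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point (x right, y down, z forward)
    to pixel coordinates. Returns None for points behind the camera."""
    x, y, z = point
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def label_clusters(centroids, detections, intrinsics):
    """Assign each cluster the label of the highest-scoring detection whose
    bounding box contains the projected centroid, or None if there is none.

    detections: list of {"box": (u_min, v_min, u_max, v_max), "label": str, "score": float}
    intrinsics: (fx, fy, cx, cy)
    """
    labels = []
    for c in centroids:
        uv = project(c, *intrinsics)
        label = None
        if uv is not None:
            hits = [
                d for d in detections
                if d["box"][0] <= uv[0] <= d["box"][2]
                and d["box"][1] <= uv[1] <= d["box"][3]
            ]
            if hits:
                label = max(hits, key=lambda d: d["score"])["label"]
        labels.append(label)
    return labels


intrinsics = (600.0, 600.0, 320.0, 240.0)
detections = [{"box": (300, 200, 340, 280), "label": "person", "score": 0.9}]
centroids = [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0), (0.0, 0.0, -1.0)]
labels = label_clusters(centroids, detections, intrinsics)
```

Matching on the centroid alone is the simplest option; a more robust variant would project all cluster points and vote, which handles partially occluded objects better at a higher compute cost.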

Enhancing perception accuracy through fusion of LiDAR, IMU, and 3D camera data.

Personal R&D in autonomous driving.

Tested Autoware with HD maps and implemented MPC navigation control.

Using NVIDIA Isaac and Isaac Sim for high-fidelity simulation, robot testing, and reinforcement learning policy training in virtual environments before deployment.

Using the PX4 autopilot together with 3D obstacle avoidance and B-spline-based trajectory planning.

Events

A showcase of events I contributed to

- In this interview, I talked about how quickly humanoid robotics is evolving, especially in terms of sensory capabilities.

- I explained that robots don’t actually see like humans — they rely on sensors, algorithms, and reinforcement learning to interpret their environment and mimic natural movement.

- We also discussed the recent robot showcases in China.

- While they look impressive, I clarified that most of those performances are pre-programmed rather than truly autonomous.

- I ended by sharing my perspective on the future of robotics in healthcare and daily life, emphasizing that although AI can simulate emotions, it still lacks real human consciousness and judgment.

- Integrated into the Duet service robot, this AI-driven interactive agent engages with children to assess their hobbies and talents through conversational interaction and guided activities.

- Features include talent demonstration recording, interactive storytelling, digital painting interface, and automated report generation for parents.

- Designed to create an engaging, educational experience while providing actionable insights for talent development.

- Engineered control, perception, and targeting systems for two omni-wheel drive robots showcased in an interactive VR shooting game at Gitex 2023.

- One robot operated autonomously, using fused odometry from wheel encoders, IMU, and camera-based ArUco marker pose estimation for navigation and aiming.

- It autonomously detected and engaged targets by calibrating pitch and yaw mechanisms and dynamically controlling the motorized shooting angle.

- The second robot was manually operated by a human opponent via VR headset and joystick, receiving real-time video feed through WebRTC streaming.

- The combination of precise omni-wheel kinematics, multi-sensor fusion, and integrated targeting systems created an immersive competitive experience between human and autonomous players.

- Developed a real-time interactive installation where a UR10 Universal Robot engages in a hockey-style game by detecting and reacting to a moving puck.

- Implemented trajectory estimation using ArUco markers, camera calibration, and high-speed vision processing to calculate puck speed, position, and future path.

- Designed decision-making logic to classify actions as defensive or offensive, and generated precise 2D plane position commands for the UR10 to execute responsive movements.

- The system was optimized for accuracy, speed, and audience engagement in a live event environment.
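
To give a sense of the trajectory estimation described above, the sketch below fits a constant-velocity model to recent puck positions and predicts where the puck will cross a defence line. It is a simplified illustration under stated assumptions: positions are already expressed in table coordinates (metres), bounces off the side walls are ignored, and all names are hypothetical.

```python
import math


def fit_line(ts, vals):
    """Least-squares fit vals ~ intercept + slope * t.
    Needs at least two distinct timestamps."""
    n = len(ts)
    t_mean = sum(ts) / n
    v_mean = sum(vals) / n
    var = sum((t - t_mean) ** 2 for t in ts)
    slope = sum((t - t_mean) * (v - v_mean) for t, v in zip(ts, vals)) / var
    return v_mean - slope * t_mean, slope


def predict_crossing(ts, xs, ys, y_line):
    """Return (t_cross, x_cross, speed) for the moment the puck reaches
    y = y_line, or None if it is not heading towards that line."""
    x0, vx = fit_line(ts, xs)
    y0, vy = fit_line(ts, ys)
    if vy == 0:
        return None
    t_cross = (y_line - y0) / vy
    if t_cross < ts[-1]:
        return None  # crossing lies in the past: puck is moving away
    return t_cross, x0 + vx * t_cross, math.hypot(vx, vy)


# Three observations, 0.1 s apart, of a puck approaching the line y = 0.
ts = [0.0, 0.1, 0.2]
xs = [0.0, 0.05, 0.10]
ys = [1.0, 0.8, 0.6]
prediction = predict_crossing(ts, xs, ys, y_line=0.0)
```

The predicted crossing point is the kind of 2D target that could be sent to the arm as a position command; the fit window length trades responsiveness against noise rejection.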

- Developed and integrated a control system for an ABB YuMi collaborative robot to synchronize its movements with live music beats in real time.

- The robot was programmed to operate a DJ mixer and perform smooth transitions, creating an engaging and visually synchronized experience for event audiences.

Video Gallery

Watch my robotics projects in action - from live demonstrations to technical deep-dives

Project Demonstrations

Projects

Fleet Management System for Robots

Custom multi-robot management software

MDR-C

Duet

MDRA

Mozo

MB90A

MB90A Payload

Mozo Demo

Kitchen Gantry System

Automated gantry for food delivery.

Fails

Academic Journey & Recognition

Foundations and milestones in robotics

Graduation Project - Mobile Service Robot Based on GMapping SLAM

2018

Helwan University, Mechatronics Department

Academic Projects

ABU Robocon — Japan

2017

ABU Robocon International Robotics Competition

Competition

ABU Robocon — Indonesia

2015

ABU Robocon International Robotics Competition

Competition

ABU Robocon — Vietnam

2014

ABU Robocon International Robotics Competition

Competition

P&G Robo-Competition

2016

Procter & Gamble Robotics Challenge

Competition

University Projects: self-balancing robot, obstacle-avoiding smart car, and maze-solving robot using algorithmic pathfinding.

2013–2018

Helwan University, Mechatronics Department

Academic Projects

The Studio

Where ideas turn into autonomous reality

"The future is autonomous — I'm just helping get there faster."

Omar Walid